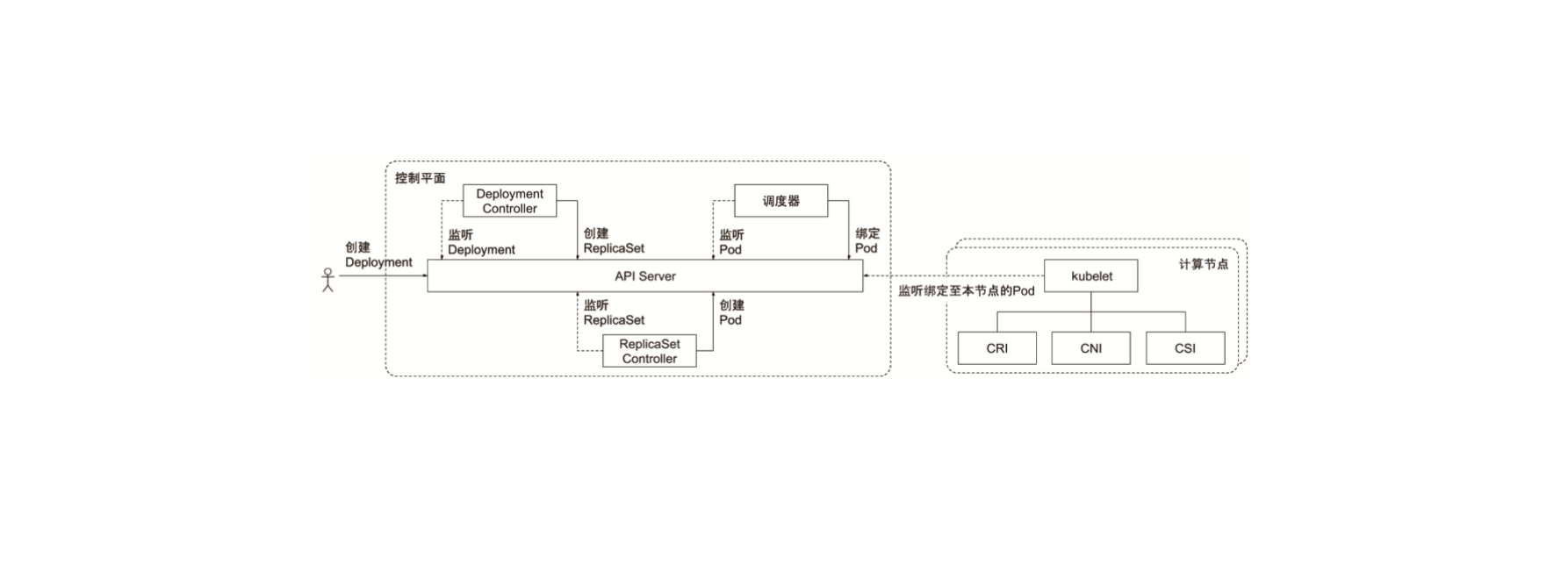

Controller Manager is the automation control center of a Kubernetes cluster. It contains over 30 controllers that manage Pod-related, network-related, storage-related operations, and more. Most controllers work the same way: each is a control loop that watches its corresponding resources through the API server, decides on the next action based on the object’s state, and drives the object toward the desired state.

- Controller Manager is the brain of the cluster and the key to keeping it running.

- Its role is to ensure Kubernetes follows the declarative system specification, keeping the system’s Actual State consistent with the user-defined Desired State.

- Controller Manager is a combination of multiple controllers. Each controller is a control loop responsible for watching its managed objects and reconciling them when they change.

- When a controller fails to reconcile an object, it typically retries automatically. This continuous retry mechanism is how the cluster as a whole achieves Eventual Consistency.
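The control loop and retry behavior described above can be illustrated with a small sketch. This is a toy model, not Kubernetes code: `FakeCluster`, `reconcile`, and `control_loop` are hypothetical names, and a real controller would requeue failed keys with backoff instead of looping immediately.

```python
from dataclasses import dataclass


@dataclass
class FakeCluster:
    """Hypothetical in-memory stand-in for the API server and the real world."""
    desired_replicas: int
    actual_replicas: int = 0
    fail_next: int = 0  # number of upcoming create calls that should fail

    def create_pod(self) -> None:
        if self.fail_next > 0:
            self.fail_next -= 1
            raise RuntimeError("transient failure creating pod")
        self.actual_replicas += 1

    def delete_pod(self) -> None:
        self.actual_replicas -= 1


def reconcile(cluster: FakeCluster) -> bool:
    """Take one step toward the desired state; return True once converged."""
    diff = cluster.desired_replicas - cluster.actual_replicas
    if diff > 0:
        cluster.create_pod()
    elif diff < 0:
        cluster.delete_pod()
    return cluster.actual_replicas == cluster.desired_replicas


def control_loop(cluster: FakeCluster, max_iterations: int = 100) -> int:
    """Run reconcile until converged, retrying after failures.

    Returns the number of iterations that were needed.
    """
    for i in range(1, max_iterations + 1):
        try:
            if reconcile(cluster):
                return i
        except RuntimeError:
            # Simplification: retry on the next iteration without backoff.
            continue
    raise TimeoutError("did not converge")


cluster = FakeCluster(desired_replicas=3, fail_next=2)
iterations = control_loop(cluster)
print(cluster.actual_replicas, iterations)
```

Even though the first two attempts fail, the loop keeps retrying until the actual state matches the desired state, which is the eventual consistency property in miniature.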

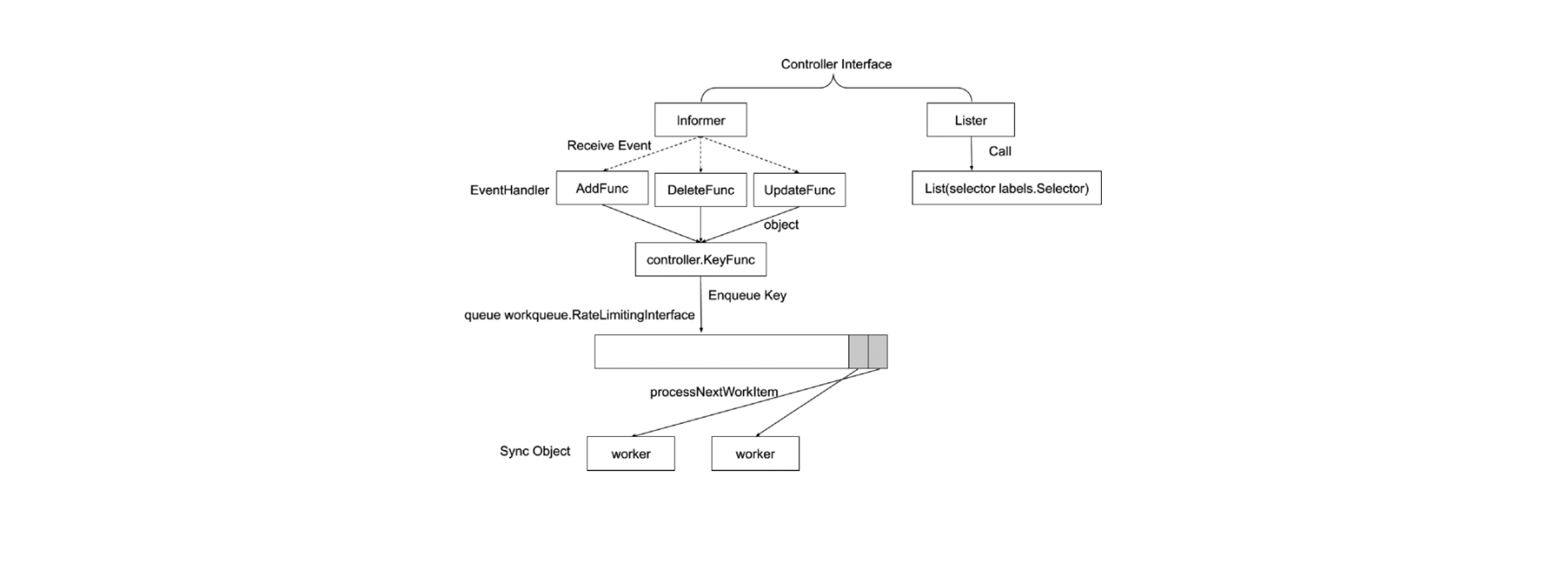

Controller Workflow

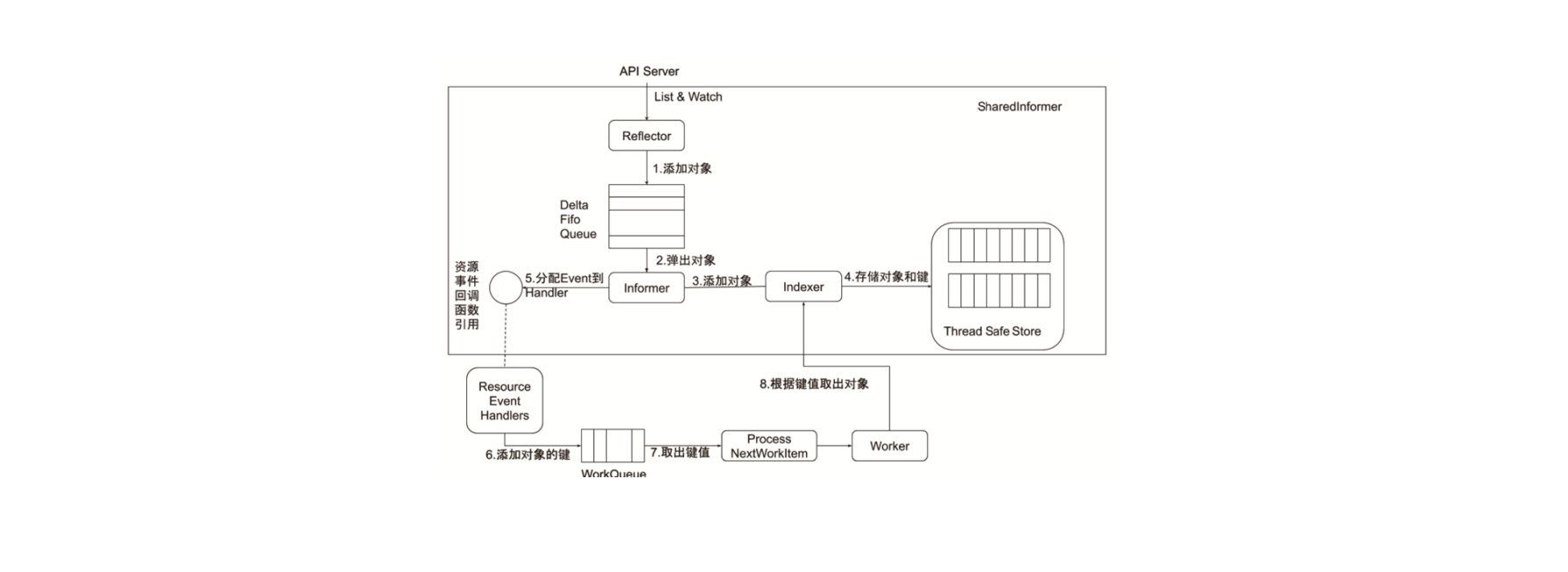

Informer Internal Mechanism

Controller Collaborative Work Principle

Common Controllers

- Required Controllers
  - EndpointController
  - ReplicationController
  - PodGCController
  - ResourceQuotaController
  - NamespaceController
  - ServiceAccountController
  - GarbageCollectorController
  - DaemonSetController
  - JobController
  - DeploymentController
  - ReplicaSetController
  - HPAController
  - DisruptionController
  - StatefulSetController
  - CronJobController
  - CSRSigningController
  - CSRApprovingController
  - TTLController

- Default-enabled Optional Controllers (can be toggled via options)
  - TokenController
  - NodeController
  - ServiceController
  - RouteController
  - PVBinderController
  - AttachDetachController

- Default-disabled Optional Controllers (can be toggled via options)
  - BootstrapSignerController
  - TokenCleanerController

Cloud Controller Manager

When do you need Cloud Controller Manager?

Cloud Controller Manager was separated from kube-controller-manager starting in Kubernetes 1.6, mainly because Cloud Controller Manager often needs deep integration with enterprise clouds, and its release cycle is relatively independent from Kubernetes.

Upgrading it together with Kubernetes core management components is time-consuming and labor-intensive.

Typically, Cloud Controller Manager needs:

- Authentication/Authorization: Enterprise clouds often require authentication credentials. For Kubernetes to interact with cloud APIs, it needs to obtain Service Accounts from the cloud system.

- Cloud Controller Manager itself, as a user-space component, needs proper RBAC settings in Kubernetes to obtain resource operation permissions.

- High Availability: Leader election is needed to ensure Cloud Controller Manager’s high availability.

Because cloud-specific logic used to be built into the core components, running Cloud Controller Manager requires the following configuration:

- kube-apiserver and kube-controller-manager must NOT specify cloud-provider, otherwise the built-in cloud controller manager will be loaded.

- kubelet needs to be configured with --cloud-provider=external.

Cloud Controller Manager mainly supports:

- Node Controller: Accesses the cloud API to update node status and deletes nodes from Kubernetes after they’re deleted in the cloud.

- Route Controller: Configures routes in the cloud environment.

- Service Controller: Configures LB VIPs for LoadBalancer-type services.
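As a rough illustration of the Node Controller behavior listed above, the sketch below syncs a cluster's node list against what a cloud API reports. Everything here is hypothetical: `sync_nodes` and the plain dictionaries stand in for the Kubernetes and cloud clients, and the real controller applies additional checks before deleting a node.

```python
from typing import Dict, List


def sync_nodes(cluster_nodes: Dict[str, str], cloud_instances: Dict[str, str]) -> List[str]:
    """Update node status from the cloud and drop nodes the cloud no longer has.

    cluster_nodes:   node name -> status as recorded in Kubernetes (mutated in place)
    cloud_instances: node name -> status as reported by a hypothetical cloud API
    Returns the sorted names of the nodes that were deleted.
    """
    deleted = []
    for name in list(cluster_nodes):  # copy keys, since we delete while iterating
        if name not in cloud_instances:
            # The instance is gone in the cloud, so remove the node object too.
            del cluster_nodes[name]
            deleted.append(name)
        else:
            # The instance still exists, so refresh its status.
            cluster_nodes[name] = cloud_instances[name]
    return sorted(deleted)


nodes = {"node-a": "Ready", "node-b": "Ready", "node-c": "NotReady"}
cloud = {"node-a": "Ready", "node-b": "NotReady"}
print(sync_nodes(nodes, cloud), nodes)
```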

Custom Cloud Controllers Needed

- Ingress Controller

- Service Controller

- Self-developed Controllers

- RBAC Controller

- Account Controller

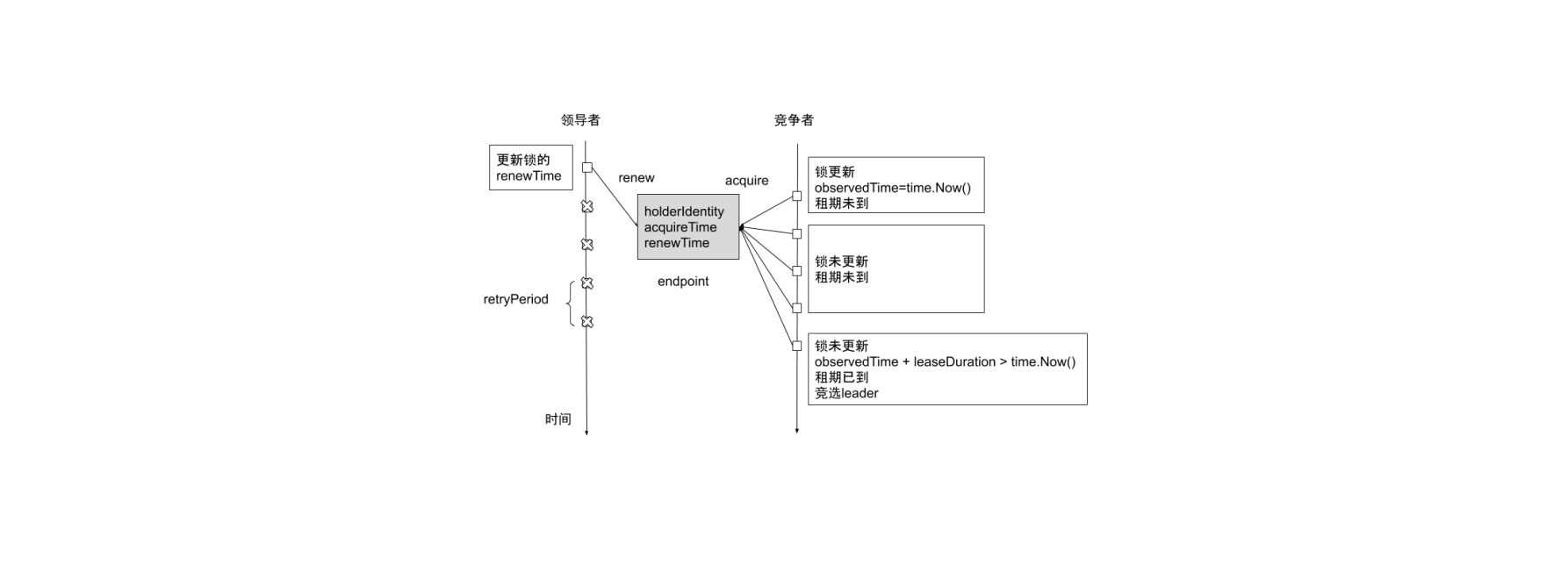

High Availability

When started with --leader-elect=true, the controller manager uses multi-node leader election to select a primary node. Only the primary node calls StartControllers() to start all controllers, while other secondary nodes only execute the election algorithm.

The multi-node leader election implementation can be found in leaderelection.go. It implements two resource locks (Endpoint or ConfigMap; both kube-controller-manager and cloud-controller-manager use Endpoint locks) and records which instance currently holds leadership by updating the resource’s annotation (control-plane.alpha.kubernetes.io/leader). Newer Kubernetes releases default to Lease objects for this lock instead.
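To make the annotation-based lock more concrete, here is a minimal sketch of the acquire-or-renew step. It is a toy model under stated assumptions: `ToyLock` and `try_acquire_or_renew` are hypothetical names, time is passed in explicitly, the 15-second lease duration is just a default chosen for the sketch, and the optimistic-concurrency check that makes the real update safe is omitted.

```python
import json
from typing import Dict

LEADER_ANNOTATION = "control-plane.alpha.kubernetes.io/leader"


class ToyLock:
    """In-memory stand-in for the Endpoints/ConfigMap object carrying the lock."""

    def __init__(self) -> None:
        self.annotations: Dict[str, str] = {}


def try_acquire_or_renew(lock: ToyLock, identity: str, now: float,
                         lease_duration: float = 15.0) -> bool:
    """Return True if `identity` holds the lock after this call."""
    raw = lock.annotations.get(LEADER_ANNOTATION)
    if raw is not None:
        record = json.loads(raw)
        held_by_other = record["holderIdentity"] != identity
        still_valid = now < record["renewTime"] + record["leaseDurationSeconds"]
        if held_by_other and still_valid:
            return False  # someone else is the leader and the lease has not expired
    # Either the lock is free, expired, or already ours: write a fresh record.
    lock.annotations[LEADER_ANNOTATION] = json.dumps({
        "holderIdentity": identity,
        "leaseDurationSeconds": lease_duration,
        "renewTime": now,
    })
    return True


lock = ToyLock()
print(try_acquire_or_renew(lock, "manager-a", now=0.0))   # manager-a takes the lock
print(try_acquire_or_renew(lock, "manager-b", now=5.0))   # lease still valid
print(try_acquire_or_renew(lock, "manager-b", now=30.0))  # lease expired, takeover
```

Only the instance for which this call keeps returning True would go on to start its controllers; the others keep calling it and stay idle until the lease expires.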

High Performance

Starting from Kubernetes 1.7, all calls that need to monitor resource changes are recommended to use Informer. Informer provides an event-notification-based read-only caching mechanism that allows registering callback functions for resource changes and dramatically reduces API calls.

For Informer usage examples, see here.
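The idea behind Informer (a local read-only cache that is kept current by watch events and notifies registered callbacks) can be sketched without any Kubernetes dependency. `ToyInformer` below is a hypothetical teaching model, not the client-go API; only the event type names ADDED, MODIFIED, and DELETED are borrowed from the Kubernetes watch protocol.

```python
from typing import Callable, Dict, List, Optional


class ToyInformer:
    """Toy model of an informer: a local cache plus change callbacks."""

    def __init__(self) -> None:
        self._cache: Dict[str, dict] = {}
        self._on_add: List[Callable[[dict], None]] = []
        self._on_update: List[Callable[[dict, dict], None]] = []
        self._on_delete: List[Callable[[dict], None]] = []

    def add_event_handler(self, on_add=None, on_update=None, on_delete=None) -> None:
        """Register callbacks; any of them may be omitted."""
        if on_add is not None:
            self._on_add.append(on_add)
        if on_update is not None:
            self._on_update.append(on_update)
        if on_delete is not None:
            self._on_delete.append(on_delete)

    def handle_watch_event(self, event_type: str, key: str, obj: Optional[dict]) -> None:
        """Apply one watch event to the cache, then notify the handlers."""
        if event_type == "DELETED":
            old = self._cache.pop(key, None)
            if old is not None:
                for cb in self._on_delete:
                    cb(old)
            return
        old = self._cache.get(key)
        self._cache[key] = obj
        if old is None:
            for cb in self._on_add:
                cb(obj)
        else:
            for cb in self._on_update:
                cb(old, obj)

    def get(self, key: str) -> Optional[dict]:
        """Read from the local cache; no API call is made."""
        return self._cache.get(key)

    def list_objects(self) -> List[dict]:
        return list(self._cache.values())


informer = ToyInformer()
informer.add_event_handler(on_add=lambda obj: print("added", obj["name"]))
informer.handle_watch_event("ADDED", "default/web", {"name": "web", "replicas": 1})
print(informer.get("default/web"))
```

Reads go to the cache rather than the API server, which is where the reduction in API calls comes from.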

Node Eviction

When a node becomes abnormal, Node Controller evicts Pods from unhealthy nodes at the default rate (--node-eviction-rate=0.1, i.e., at most one node every 10 seconds). Node Controller divides nodes into different groups by Zone and adjusts the rate based on Zone status:

- Normal: All nodes are Ready, evict at default rate.

- PartialDisruption: More than 33% of nodes are NotReady. When the abnormal node ratio exceeds --unhealthy-zone-threshold=0.55, the rate slows:

  - Small clusters (node count < --large-cluster-size-threshold=50): Stop eviction

  - Large clusters: Slow rate to --secondary-node-eviction-rate=0.01

- FullDisruption: All nodes are NotReady, return to default eviction rate. But when all Zones are in FullDisruption, stop eviction.
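The rate selection rules above can be written down as a small function. This is a sketch of the rules as this article states them, not the Node Controller source: the function names are hypothetical, the defaults mirror the flag values quoted above, and the exact boundary handling (strictly greater than the threshold) is an assumption.

```python
def classify_zone(ready: int, total: int, unhealthy_zone_threshold: float = 0.55) -> str:
    """Classify a zone from its node counts (simplified reading of the rules)."""
    not_ready = total - ready
    if total > 0 and not_ready == total:
        return "FullDisruption"
    if total > 0 and not_ready / total > unhealthy_zone_threshold:
        return "PartialDisruption"
    return "Normal"


def eviction_rate(state: str,
                  node_count: int,
                  all_zones_fully_disrupted: bool = False,
                  node_eviction_rate: float = 0.1,
                  secondary_node_eviction_rate: float = 0.01,
                  large_cluster_size_threshold: int = 50) -> float:
    """Return the eviction rate in nodes per second; 0.0 means eviction stops."""
    if state == "Normal":
        return node_eviction_rate
    if state == "PartialDisruption":
        if node_count < large_cluster_size_threshold:
            return 0.0  # small cluster: stop eviction
        return secondary_node_eviction_rate  # large cluster: slow down
    if state == "FullDisruption":
        # If every zone is down, the problem is likely the control plane's view.
        return 0.0 if all_zones_fully_disrupted else node_eviction_rate
    raise ValueError(f"unknown zone state: {state}")


state = classify_zone(ready=40, total=100)
print(state, eviction_rate(state, node_count=100))
```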

Leader Election Mechanism

Conclusion

Each controller plays its designated role, with clean separation of responsibilities between them. Well done!

Feel free to leave a comment on my blog. Your feedback motivates me to keep writing. Thank you for reading, and let’s grow together to become better versions of ourselves.